Rankings are important in American higher education.

Many publications produce lists of the best colleges and universities, and almost every university in the United States asks its students to evaluate their professors. However, universities rarely share this information with students.

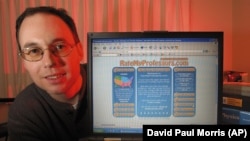

In 1999, a computer programmer designed a website called RateMyProfessors. The site allows students from Canada, Britain and the U.S. to rate their professors on a scale of 1 to 5 in several areas. These areas have included clarity, easiness, helpfulness and overall quality. The site has also let students rate the professors’ physical appeal.

As of December 2016, RateMyProfessors listed about 17 million ratings of more than 1.6 million professors from over 7,000 schools. However, last May, the site removed clarity and helpfulness as individual qualities to be rated.

Universities rarely, if ever, use RateMyProfessors ratings to evaluate the performance of a professor. Students may find them useful. However, this system of evaluation does present problems.

Andrew Rosen, a graduate student studying chemical engineering at Northwestern University outside of Chicago, says he has used RateMyProfessors in the past. He likes that students can list comments about professors to give more information than just a rating.

But Rosen says he has noticed a sharp gender disparity in such ratings.

So he designed a computer program to study almost 8 million ratings of professors at U.S. universities. He says no one had ever done a study of this kind. The academic publication Assessment and Evaluation in Higher Education published the findings in January.

Rosen compared average overall quality scores between professors in different areas of study. He also compared average overall quality scores between male and female professors.

"So across all of the disciplines on the site, there’s not one discipline where female professors score higher than male professors," Rosen said. "And, of course, by that I don’t mean to imply that female professors may not be as good professors as male professors. That’s not true at all. What I do mean is that students that submit these reviews may have gender biases against or for certain professors."

Rosen says students may not realize they are consistently rating women lower than men. But he says the study shows that subconscious bias among students affects their judgement. And, he says, gender is not the only thing students are considering.

Rosen found that professors with higher average scores for “easy” classes also had higher average overall quality scores. Overall quality scores were also higher for professors rated as good looking.

There were also major differences in how students view academic fields. Professors of mathematics and science often had lower ratings than those teaching art or language. For example, the average overall quality rating of physics professors was 3.4 out of 5, while the average for foreign language professors was 4.

Rosen suggests this may be because professors in some fields have more experience in research than in teaching. But the rating process could also be affected by student expectations as to how professors of certain subjects should look and act.

"Some disciplines probably have different gender stereotypes," Rosen said. "The stereotypical image of a scientist is kind of like a white male in a lab coat with a beaker, right? So, if you have a professor that doesn’t fit that mold, perhaps these gender stereotypes … are causing these differences."

RateMyProfessors chose not to speak with VOA for this story. But other experts in higher education also argue these types of rating methods have problems.

Philip Stark, a statistics professor at the University of California at Berkeley, and his research partner, Anne Boring, also published a study of student evaluations of professors.

They looked at about 23,000 university-operated student evaluations of 379 professors in France. They also looked at a U.S. study of college student evaluations of an online class. Students in that class had never met their professors or even learned their names.

Two classes secretly had a male teacher and two other classes had a female teacher.

Stark and Boring found the French students and the U.S. students both favored male professors in their ratings. And in the online classes, the students gave lower ratings when they thought their teacher was a woman. This happened despite test results showing students performed better in the classes taught by the female teacher.

Stark says universities began using their own student evaluation systems in employment decisions about 30 years ago. He says it proved an easy and low-cost way of measuring teacher effectiveness.

But he says it may not be fair to judge all professors in the same way.

"It gives students a voice … It makes it really easy on administrators to rank people," Stark said. "The problem is that it may be giving students a voice in the wrong way, or we may be misinterpreting exactly what the voices are able to judge well. And in making it easy for the university, the administration to do their job, that doesn’t mean that they’re doing a good job as a result."

Stark says there is no proof that male professors perform better than female professors. And students may not fully understand why some material is more useful than other material, and so may not be fit to judge.

He suggests administrators should instead take care when evaluating teaching ability. They should consider the time and effort professors put into teaching in and outside their classes.

That way professors can be rated on their level of commitment, and not just by student opinion.